She’s almost 70 and spends all day watching QAnon-style videos (but in Spanish), and every day she’s anguished about something new. Last week she was asking us to start digging a nuclear shelter because Russia had dropped a nuclear bomb on Ukraine. Before that she was begging us to install reinforced doors because the indigenous population was about to invade the cities and kill everyone with poisonous arrows. I have access to her YouTube account and I’m trying to unsubscribe and report the videos, but the recommended videos keep feeding her more crazy shit.

At this point I would set up a new account for her - I’ve found Youtube’s algorithm to be very… persistent.

Unfortunately, it’s linked to the account she uses for her job.

You can make “brand accounts” on YouTube that are a completely different profile from the default account. She probably won’t notice if you make one and switch her to it.

You’ll probably want to spend some time using it for yourself secretly to curate the kind of non-radical content she’ll want to see, and also set an identical profile picture on it so she doesn’t notice. I would spend at least a week “breaking it in.”

But once you’ve done that, you can probably switch to the brand account without logging her out of her Google account.

I love how we now have to monitor what the generation that told us “Don’t believe everything you see on the internet” watches, the way we would for children.

We can thank all that tetraethyllead gas that was pumping lead into the air from the 20s to the 70s. Everyone got a nice healthy dose of lead while they were young. Made 'em stupid.

OP’s mom breathed nearly 20 years worth of polluted lead air straight from birth, and OP’s grandmother had been breathing it for 33 years up until OP’s mom was born. Probably not great for early development.

Shoutout to lead paint and asbestos maybe?

Asbestos is respiratory, nothing to do with brain development or damage.

Hence the maybe. :)

Thanks!

HOW DO I PRINT THE FACE BOOK ?

She’s going to seek this stuff out and the algorithm will keep feeding her. This isn’t just a YouTube problem, this is also a mom problem.

Delete watch history, find and watch nice channels and her other interests, log in to the account on a spare browser on your own phone periodically to make sure there’s no repeat of what happened.

I’m a bit disturbed how people’s beliefs are literally shaped by an algorithm. Now I’m scared to watch Youtube because I might be inadvertently watching propaganda.

It’s even worse than “a lot easier”. Ever since the advances in ML went public, with things like Midjourney and ChatGPT, I’ve realized that ML models are way, way better at doing their thing than I’d thought.

The Midjourney model’s purpose is to receive text and give out a picture. And it’s really good at that, even though the dataset wasn’t really that large. Same with ChatGPT.

Now, Meta has (EDIT: just speculation, but I’m 95% sure they do) a model which receives all the data they have about a user (which is A LOT) and returns which posts to show him and in what order, to maximize his time on Facebook. And it has been trained for years on a live dataset of 3 billion people interacting daily with the site. That’s a wet dream for any ML model. Imagine what it would be capable of even if it was only as good as ChatGPT at doing its task - and it has an incomparably better dataset and learning opportunities.

I’m really worried for the future in this regard, because it’s only a matter of time until someone with power decides that the model should not only keep people on the platform, but also make them vote for X. And there is nothing you can do to defend against it, other than never interacting with anything with curated content, such as Google search, YT or anything Meta - because even if you know that there’s a model trying to manipulate you, the model knows there are a lot of people like that. And it’s already learning and testing how to manipulate even people like that. After all, it has 3 billion people as test subjects.

That’s why I’m extremely focused on privacy and on my data - not because I have something to hide, but because I take really great issue with someone using such data to train models like that.

Just to let you know, Meta has an open source model, LLaMA, and it’s basically state of the art for the open source community, but it falls short of GPT-4.

The nice thing about the LLaMA derivatives (Vicuna and WizardLM) is that you can run them locally with about 80% of ChatGPT 3.5’s efficiency, so no one is tracking your searches/conversations.

I was using ChatGPT only as an example - I don’t think that making a chatbot AI is their focus, so it’s understandable that they are not as good at it - plus, I’d guess that producing coherent text is a lot harder than deciding what kind of videos or posts to put in someone’s feed.

And that AI, the one that takes a user’s data as input and outputs what to show him in his feed to keep him glued to Facebook for as long as possible, I’m almost sure is one of the best ML models we have in the world right now - simply because of the user base and time it has had to learn on, and the sheer amount of data Meta has about users. But that’s also something that will never be made public, naturally.

My personal opinion is that it’s one of the first large cases of misalignment in ML models. I’m 90% certain that Google and other platforms have for years been using ML models that take a user’s history and the data they have about him as input and output which videos to offer him, with the goal of maximizing the time he spends watching videos (or on Facebook, etc).

And the models eventually found out that if you radicalize someone, isolate them in a conspiracy that makes them an outsider or a nutjob, and then provide a safe space and an echo chamber on the platform, be it Facebook or YouTube, they will eventually start spending most of their time there.

I think this subject was touched upon in The Social Dilemma, but given what is happening in the world and how conspiracies and disinformation seem to be getting more and more common and people more radicalized, I’m almost certain that the algorithms are to blame.

If YouTube’s “algorithm” is optimizing for watch time, then the optimal solution is to make people addicted to YouTube.

The scariest thing, I think, is that the best way to optimize the reward is not to recommend a good video but to reprogram a human to watch as much as possible.
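
To make that concrete, here is a toy sketch (not YouTube’s actual system, which nobody in this thread has seen). It is a plain epsilon-greedy bandit whose only reward is minutes watched. The category names and the average watch times are made-up assumptions; the point is just that an optimizer like this drifts to whatever holds attention longest, without any notion of what the content is.

```python
import random

# Hypothetical average minutes watched per recommendation, by category.
# These numbers are invented purely for illustration.
MEAN_WATCH_MINUTES = {"crafts": 4.0, "history": 6.0, "outrage": 12.0}


def simulate(mean_watch_minutes, rounds=5000, epsilon=0.1, seed=0):
    """Epsilon-greedy bandit whose only reward signal is minutes watched."""
    rng = random.Random(seed)
    categories = list(mean_watch_minutes)
    shown = {c: 0 for c in categories}        # times each category was recommended
    avg_watch = {c: 0.0 for c in categories}  # running mean of observed watch time

    for _ in range(rounds):
        if rng.random() < epsilon:
            # Explore: occasionally try a random category.
            choice = rng.choice(categories)
        else:
            # Exploit: recommend whatever has held attention longest so far.
            choice = max(categories, key=lambda c: avg_watch[c])

        # Simulated viewer: noisy watch time around the category's mean.
        watched = max(0.0, rng.gauss(mean_watch_minutes[choice], 1.0))

        shown[choice] += 1
        avg_watch[choice] += (watched - avg_watch[choice]) / shown[choice]

    return shown


shown = simulate(MEAN_WATCH_MINUTES)
print(shown)
```

Nothing in the loop knows or cares what “outrage” means. It ends up dominating the recommendations only because it was assigned the longest watch time.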

I think that making someone addicted to YouTube would be harder than simply slowly radicalizing them into a shunned echo chamber about a conspiracy theory. Because if you try to make someone addicted to YouTube, they will still have an alternative in the real world, friends and family to return to.

But if you radicalize them into something that will make them seem like a nutjob, you don’t have to compete with their surroundings - the only place where people understand them is YouTube.

100% they’re using ML, and 100% it found a strategy they didn’t anticipate

The scariest part of it, though, is their willingness to continue using it despite the obvious consequences.

I think misalignment is not only likely to happen (for an eventual AGI), but likely to be embraced by the entities deploying them because the consequences may not impact them. Misalignment is relative

fuck, this is dark and almost awesome but not in a good way. I was thinking the fascist funnel was something of a deliberate thing, but maybe these engagement algorithms have more to do with it than large shadow actors putting the funnels into place. Then there’s the folks who will create any sort of content to game the algorithm and you’ve got a perfect trifecta of radicalization

Fascist movements and cult leaders long ago figured out the secret to engagement: keep people feeling threatened, play on their insecurities, blame others for all the problems in people’s lives, use fear and hatred to cut them off from people outside the movement, make them feel like they have found a bunch of new friends, etc. Machine learning systems for optimizing engagement are dealing with the same human psychology, so they discover the same tricks to maximize engagement. Naturally, this leads to YouTube recommendations directing users towards fascist and cult content.

That’s interesting. That it’s almost a coincidence that fascists and engagement algorithms have similar methods to suck people in.

You watch this one thing out of curiosity, morbid curiosity, or by accident, and at the slightest poke the goddamned mindless algorithm starts throwing this shit at you.

The algorithm is “weaponized” for who screams the loudest, and I truly believe it started due to myopic incompetence/greed, not political malice. Which doesn’t make it any better, as people don’t know how to take care of themselves from this bombardment, but the corporations like to pretend that ~~they~~ people can, so they wash their hands for as long as they are able.

Then on top of this, the algorithm has been further weaponized by even more malicious actors who have figured out how to game the system.

That’s how toxic meatheads like Infowars and Joe Rogan get a huge bullhorn that reaches millions. “Huh… DMT experiences… sounds interesting”, the format is entertaining… and before you know it, you are listening to anti-vax and QAnon excrement, and your mind starts to normalize the most outlandish things. EDIT: a word, for clarity

Whenever I end up watching something from a bad channel I always delete it from my watch history, in case that affects my front page too.

Huh, I tried that. Still got recommended incel videos for months after watching a moron “discuss” the Captain Marvel movie. Eventually went through and clicked “don’t recommend this” on anything that showed up on my front page; that helped.

I do that, too.

However, I’m convinced that YouTube still has a “suggest list” bound to IP addresses. Quite often I’ll have videos that other people in my household have watched suggested to me. Some of it can be explained by similar interests, but it happens suspiciously often.

I can confirm the IP-based suggestions!

My hubs and I watch very different things. Him: photography equipment reviews, photography how to’s, and old, OLD movies. Me: Pathfinder 2e, quantum field theory/mechanics and Dip Your Car.

Yet we both see stuff in the other’s Suggestions of videos the other recently watched. There’s ZERO chance based on my watch history that without IP-based suggestions YT is going to think I’m interested in watching a Hasselblad DX2 unboxing. Same with him getting PBS Space Time’s suggestions.

My normal YT algorithm was ok, but shorts tries to pull me to the alt-right.

I had to block many channels to get a sane shorts algorithm. “Do not recommend channel” really helps

It really does help. I’ve been heavily policing my YouTube feed for years and I can easily see when they make big changes to the algorithm because it tries to force-feed me polarizing or lowest-common-denominator content. Shorts are incredibly quick to smother me in rage bait, and if you so much as linger on one of those videos too long, you’re getting a cascade of alt-right bullshit shortly after.

Using Piped/Invidious/NewPipe/insert your preferred alternative frontend or patched client here (Youtube legal threats are empty, these are still operational) helps even more to show you only the content you have opted in to.

Reason and critical thinking are all the more important in this day and age. They’re just no longer taught in schools. Learn some simple key skills like noticing fallacies or reasoning by analogy, and you will find that your view on life is far more grounded and harder to shift

I think it’s worth pointing out “no longer” is not a fair assessment since this is regularly an issue with older Americans.

I’m inclined to believe it was never taught in schools, and it’s probably a subject teachers are increasingly likely to want to teach (i.e. if politics didn’t enter the classroom it would already be taught, and it might be in some districts).

The older generations were given catered news their entire lives, only in the last few decades have they had to face a ton of potentially insidious information. The younger generations have had to grow up with it.

A good example is that old people regularly click malicious advertising, fall for scams, etc. They’re generally not good at applying critical thinking to a computer, whereas younger people (typically, though I hear this is regressing some with smartphones) know about this stuff and are used to validating their information (or at least have a better “feel” for what’s fishy).

Just be aware that we can ALL be manipulated, the only difference is the method. Right now, most manipulation is on a large scale. This means they focus on what works best for the masses. Unfortunately, modern advances in AI mean that automating custom manipulation is getting a lot easier. That brings us back into the firing line.

I’m personally an Aspie with a scientific background. This makes me fairly immune to a lot of manipulation tactics in widespread use. My mind doesn’t react how they expect, and so it doesn’t achieve the intended result. I do know however, that my own pressure points are likely particularly vulnerable. I’ve not had the practice resisting having them pressed.

A solid grounding gives you a good reference, but no more. As individuals, it is down to us to use that reference to resist undue manipulation.

The only way you can’t be manipulated is if you are dead. All human interaction is manipulation of one sort or another. If you think you’re immune, you’re likely very vulnerable if it’s delivered in the correct way, since you’re not bothering to guard against it.

An interesting factoid I’ve run across a few times: smart people are far easier to rope into cults than stupid people. The stupid have experienced that sort of manipulation before, and so have some defenses against it. The smart people assume they wouldn’t be caught up in something like that, and so drop their guard.

In the words of Mad-eye Moody “Constant vigilance!”

imagine if they taught critical media literacy in schools. of course that would only be critical media literacy with an american propaganda backdoor but still

Texas basically banned critical thinking skills in the school system

I mean, you probably are, especially if it’s explicitly political. All I can recommend is CONSTANT VIGILANCE!

YouTube’s entire business is propaganda: Ads.

What ad? Glances at uBlock Origin

Lately the number of ads on YouTube has increased by an order of magnitude. What they managed to accomplish was driving me away.

I watch a lot of history, science, philosophy, stand up, jam bands and happy uplifting content… I am very much so feeding my mind lots of goodness and love it…

At this point, any channel that I know is either bullshit or annoying af I just block. Out of sight out of mind.

Same. I have ads blocked and open YouTube directly to my subbed channels only. Rarely open the home tab or check related videos because of the amount of click bait and bs.

Ohh I just use BlockTube to block channels/ videos I don’t want to see.

Just this week I stumbled across a new YT channel that seemed to talk about some really interesting science. Almost subscribed, but something seemed fishy. Went on the channel and saw the other videos, immediately got the hell out. Conspiracies and propaganda lurk everywhere and no one is safe. Mind you, I’m about to get my bachelor’s degree next year, meaning I have received a proper scientific education. Yet I almost fell for it.

I have to clear out my youtube recommendations about once a week… no matter how many times I take out or report all the right-wing garbage, you can bet everything that by the end of the week there will be a Jordan Peterson or PragerU video in there. How are people who aren’t savvy to the right-wing’s little “culture war” supposed to navigate this?

You should use an extension like blocktube.

I probably should… but I have to admit that I kinda enjoy reporting them.

Thanks - I’ll certainly look into it.

I find it interesting how some people have so vastly different experience with YouTube than me. I watch a ton of videos there, literally hours every single day and basically all my recommendations are about stuff I’m interested in. I even watch occasional political videos, gun videos and police bodycam videos but it’s still not trying to force any radical stuff down my throat. Not even when I click that button which asks if I want to see content outside my typical feed.

My youtube is usually ok but the other day I googled an art exhibition on loan from the Tate Gallery, and now youtube is trying to show me Andrew Tate.

At one point I watched a few videos about Marvel films and the negatives about them. One was about how Captain Marvel wasn’t a good hero because she was basically invincible and all-powerful etc etc. I started getting more and more suggestions about how bad the new strong female leads in modern films are. Then I started getting politically right-leaning shit. It started really innocuously, and it’s hard to figure out if it’s leading you a certain way until it gets further along. It really made me think about what I’m watching when it comes from new channels. Obviously I’ve blocked/purged all channels like that and my experience is fine now.

I watch a ton of videos there, literally hours every single day and basically all my recommendations are about stuff I’m interested in.

The algorithm’s goal is to get you addicted to Youtube. It has already succeeded. For the rest of us who watch one video a day, if at all, it employs more heavy-handed strategies.

That’s a good point. They don’t care what I watch. They just want me to watch something.

The experience is different because it’s not one algorithm for everyone.

Demographics are targeted differently. If you actually get a real feed, it’s only because no one has yet paid YouTube for guiding you towards their product.

It would be an interesting experiment to set up two identical devices and then create different Google profiles for each just to watch the algorithm take them in different directions.

I don’t understand how these people can endure enough ads to be lured in by qanon. The people of that generation generally don’t know about decent adblockers.

the damage that corporate social media has inflicted on our social fabric and political discourse is beyond anything we could have imagined.

This is true, but one could say the same about talk radio or television.

Talk radio or television broadcasts the same stuff to everyone. It’s damaging, absolutely. But social media literally tailors the shit to be exactly what will force someone farther down the rabbit hole. It’s actively, aggressively damaging and sends people on a downward spiral way faster while preventing them from encountering diverse viewpoints.

I agree it’s worse, but I was just thinking how there are regions where people play ONLY Fox on every public television, and if you turn on the radio it’s exclusively a right-wing propagandist ranting to tell you Democrats are taking all your money to give it to black people on welfare.

Well it’s kind of true.

Uh, no. For one, Republicans spend like fuck, they just also cut taxes so they end up running up the deficit. Social welfare programs account for a tiny fraction of government budgets. The vast majority is the military and interest payments on debt.

Actually, liberal taxes pay for rural white America.

And it sucks people back in like a breadcrumbing ex when it hasn’t seen you active recently.

Yes, I agree - there have always been malevolent forces at work within the media - but before facebook started algorithmically whipping up old folks for clicks, cable TV news wasn’t quite as savage. The early days of hate-talk radio was really just Limbaugh ranting into the AM ether. Now, it’s saturated. Social media isn’t the root cause of political hatred but it gave it a bullhorn and a leg up to apparent legitimacy.

Social media is more extreme, but we can’t discount the damage Fox and people like Limbaugh or Michael Savage did.

Agreed, Ted Kaczynski was right about technology evidently.

It was just in the news that he was an MK ULTRA subject. Fucked up shit.

He was pretty good at math too

Populism and racism are as old as societies. Ancient Greece already had them. Rome fell to them. Christianity was born out of them.

Funnily enough, people always complained about how bad their society was because of this new thing. Like 5 thousand years ago already. Probably earlier even.

Which is not to say we shouldn’t do anything about it. We definitely should. But common sense won’t save us unfortunately.

In the Google account privacy settings you can delete the watch and search history. You can also delete a service such as YouTube from the account, without deleting the account itself. This might help with starting afresh.

I was so weirded out when I found out that you can hear ALL of your “hey Google” recordings in these settings.

Yeah, anything you send Google/Amazon/Facebook they will keep. I have been moving away from them. Protonmail for email, etc.

Log in as her on your device. Delete the history, turn off ad personalisation, unsubscribe and block dodgy stuff, like and subscribe healthier things, and this is the important part: keep coming back regularly to tell YouTube you don’t like any suggested videos that are down the qanon path/remove dodgy watched videos from her history.

Also, subscribe and interact with things she’ll like - cute pets, crafts, knitting, whatever she’s likely to watch more of. You can’t just block and report, you’ve gotta retrain the algorithm.

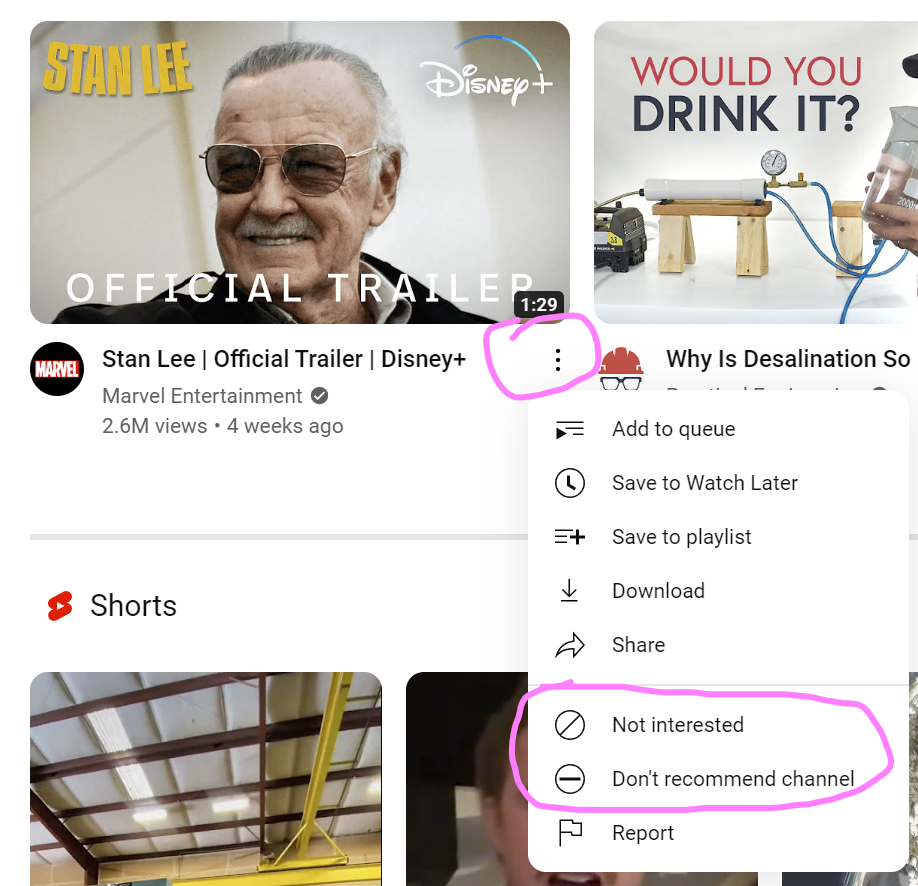

Yeah, when you go on the feed make sure to click on the 3 dots for every recommended video and “Don’t show content like this” and also “Block channel” because chances are, if they uploaded one of these stupid videos, their whole channel is full of them.

Would it help to start liking/subscribing to videos that specifically debunk those kinds of conspiracy videos? Or, at the very least, demonstrate rational concepts and critical thinking?

Probably not. This is an almost 70 year old who seems not to really think rationally in the first place. She’s easily convinced by emotional misinformation.

Probably just best to occupy her with harmless entertainment.

We recommended her a YouTube channel about linguistics and she didn’t like it because the PhD in linguistics was saying that it is OK for language to change. Unfortunately, there comes a time when people just want to see what already confirms their worldview, and anything that challenges that is taken as an offense.

Sorry to hear about your mom, and good on you for trying to steer her away from the crazy.

You can retrain YT’s recommendations by going through the suggested videos, and clicking the ‘…’ menu on each bad one to show this menu:

(no slight against Stan, he’s just what popped up)

click the Don’t recommend channel or Not interested buttons. Do this as many times as you can. You might also want to try subscribing/watching a bunch of wholesome ones that your mum might be interested in (hobbies, crafts, travel, history, etc) to push the recommendations in a direction that will meet her interests.

Edit: mention subscribing to interesting, good videos, not just watching.

You might also want to try watching a bunch of wholesome ones that your mum might be interested (hobbies, crafts, travel, history, etc) in to push the recommendations in a direction that will meet her interests.

This is a very important part of the solution here. The algorithm adapts to new videos very quickly, so watching some things you know she’s into will definitely change the recommended videos pretty quickly!

OP can make sure this continues by logging into youtube on private mode/non chrome web browser/revanced YT app using their login and effectively remotely monitor the algorithm.

(A non-Chrome browser that you don’t use is best so that you keep your stuff separate; otherwise Google will assume your device is connected to the account, which can be potentially messy in niche cases).

Delete all watch history. It will give her a whole new set of videos, like a new algorithm.

Where does she watch her YouTube videos? If it’s a computer and you’re a little techie, switch to Firefox, then change the user agent to Nintendo Switch. I find that YouTube serves fewer crazy videos for Nintendo Switch.

This never worked for me. BUT WHAT FIXED IT WAS LISTENING TO SCREAMO METAL. APPARENTLY YOUTUBE KNEW I WAS A LEFTIST then. Weird but I’ve been free ever since

This comment made me laugh so hard and I don’t know why… I love it!

in my head, I read it like someone was talking, and then had to start shouting over the sound of a train passing by, then return to normal volume as it passed

Or like someone whose SCREAMO METAL SUDDENLY TURNED ON AND THEY COULDN’T HEAR themselves thinking anymore.

change the user agent to Nintendo Switch

You mad genius, you

Here is what you can do: make a bet with her on things that she thinks are going to happen in a certain timeframe, and tell her that if she’s right, it should be easy money. I don’t like gambling, I just find it easier to convince people they might be wrong when they have to put something at stake.

A lot of these crazy YouTube cult channels have gotten fairly insidious, because they will at first lure you in with cooking, travel, and credit card tips, then they will direct you to their affiliated Qanon style channels. You have to watch out for those too.

they will at first lure you in with cooking, travel, and credit card tips

Holy crap. I had no idea. We’ve heard of a slippery slope, but this is a slippery sheer vertical cliff.

Like that toxic meathead Rogan luring the curious in with DMT stories and the like, and this sounds like that particular spore has burst and spread. Rogan did not invent this; this is the modern version of Scientology’s free e-meter reading, and historical cults have always done similar things for recruitment. You shouldn’t be paranoid and suspicious of everyone, but you should know that these things exist and watch out for them.

this is the modern version of Scientology’s free e-meter reading

I actually have a fun story about that. They once had a booth on my college campus, so just for fun I let them hook up their e-meter to me. I was extremely dubious that this device did what it claimed, but to mess with it I tried as hard as I could to think calm and relaxing thoughts. To my amazement, the needle actually went down to the “not stressed” end, so I’ve gone from thinking that the e-meter is almost certainly bunk to thinking that it is merely very probably bunk.

That isn’t the funny part, though. The funny part was that the person administering the test got really concerned and said that the device wasn’t working properly and had me take the test again. I did so, and once again the needle went down to the “not stressed” end. The person administering the test then apologized profusely that the device was clearly not working and said that they nonetheless recommended that I take their classes to deal with the stress in my life. So the whole experience was absolutely hilarious, although at the same time incredibly sad because I strongly suspect that the people at the booth weren’t saying these things in order to deceive me but because they were genuinely true believers who were incapable of seeing the plain truth even when it stared them in the face.

it’s a skin galvanometer. It measures sweating directly based on the fact that sweat is electrically conductive, then interprets more sweat as more stress. this is a fallacy as the fact that stressed people tend to sweat does NOT imply that sweaty people tend to be stressed. this works to the advantage of scientologists because genuinely stressed people will measure high, but so will a lot of unstressed people or people who are only stressed by the fact that they suddenly find themselves in an experiment. False positives and true positives are much more common than true or false negatives, and also much more profitable for scientologists. When you successfully beat the test, the person administering it insisted you go again because it’s kinda rigged and they assumed that a second reading would come back with the needle pointing strongly toward “give us a bunch of money”.

this is the central fallacy behind lie detectors as well, as they measure skin galvanic response, heart rate, and other things that are correlated with stress but can have myriad other causes, then they assume that people are stressed when they lie, then they take a flying leap to the conclusion that anyone displaying symptoms of stress must be lying.
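
The gap between “stressed people tend to sweat” and “sweaty people tend to be stressed” can be put in numbers with Bayes’ rule. This is only a rough sketch: all three probabilities below are invented for illustration, not measurements of any real e-meter or polygraph.

```python
def p_stressed_given_high_reading(p_stressed, p_high_given_stressed, p_high_given_calm):
    """Bayes' rule: P(stressed | high reading)."""
    # Total probability of a high reading, from stressed and calm people alike.
    p_high = (p_high_given_stressed * p_stressed
              + p_high_given_calm * (1.0 - p_stressed))
    return p_high_given_stressed * p_stressed / p_high


# Hypothetical numbers: 20% of passers-by are genuinely stressed,
# 90% of stressed people read high, and 50% of calm people also read high
# (nerves about the test, a warm day, naturally sweaty hands).
posterior = p_stressed_given_high_reading(
    p_stressed=0.20,
    p_high_given_stressed=0.90,
    p_high_given_calm=0.50,
)
print(f"P(stressed | high reading) = {posterior:.2f}")
```

With these made-up inputs the device catches almost every stressed person, yet most people who get a high reading are not stressed at all. That is exactly the situation described above.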

then interprets more sweat as more stress. this is a fallacy as the fact that stressed people tend to sweat does NOT imply that sweaty people tend to be stressed

It’s close enough to convince people. If there were no correlation between sweatiness and stress, the E-meter wouldn’t be a convincing recruiting tool. If it always just went to “stressed” no matter who used it or how, it wouldn’t be as convincing either. The fact that sweatiness and stress are somewhat correlated means that it can be used to bring people into the cult.

oh no one is doubting that it’s a good scam. it’s quantifiably a truly great scam, having bilked billions from a whole lot of people. but it is a scam, and the electroconductivity of your skin is not a measure of your spiritual state

"Today, we’ll learn how to make an asparagus and crabmeat omelet with mozzarella cheese. Also be sure to check out our other channels in the links right here (points to the upper left hand corner of the screen) and here (lower left hand corner). Please remember to like this video and subscribe, it really helps our channel."

it took a total of one hour of youtube autoplay for me to go from “here’s how to can your garden veggies at home” to “the (((globalists))) want to outbreed white people and force them into extinction”

My sister always mocks her and starts fighting with her about how she has always been wrong before, and she just gets defensive. It has not worked so far, except to further damage their relationship.

No, don’t mock her, that’s the last thing you should do. Don’t be emotional, be calm, and ask her if she wants to take the easy money if she is so sure about these things. It has to be about provable, objective events where there is no wiggle room, like the natives with arrows for example.

Cults will always want to isolate their prospective members from their current support groups. Don’t let them do that past the point of no return.

See if you can convince her to write down her predictions in a calendar, like “by this date X will have happened”.

You can tell her that she can use that to prove to her doubters that she was right and she called it months ago, and that people should listen to her. If somehow she’s wrong, it can be a way to show that all the things that freaked her out months ago never happened and she isn’t even thinking about them anymore because she’s freaked out about the latest issue.

Going to ask my sister to do this, it can be good for both of them.

It’s not going to work.

You can’t reason someone out of a position they didn’t reason themselves into. You have to use positive emotion to counteract the negative emotion. Literally just changing the subject to something like “Remember when we went to the beach? That was fun!” Positive emotions and memories of times before the cult brainwashing can work.

The good part about it is that once she writes her predictions down (or maybe both of them do), they can maybe move on, and talk about something other than the conspiracies.

Assuming all the predictions are wrong, especially if your mom was worked up about them months ago but completely forgot about them after that, it can be a good way to talk about how they’re manipulating her emotions.

I think it’s sad how so many of the comments are sharing strategies about how to game the Youtube algorithm, instead of suggesting ways to avoid interacting with the algorithm at all, and learning to curate content on your own.

The algorithm doesn’t actually care that it’s promoting right-wing or crazy conspiracy content; it promotes whatever keeps people’s eyeballs on Youtube. The fact is that this will always be the most enraging content. Using the “not interested” and “block this channel” buttons doesn’t make the algorithm stop trying to advertise this content; you’re teaching it to improve its strategy to manipulate you!

The long-term strategy is to get people away from engagement algorithms. Introduce OP’s mother to a patched Youtube client that blocks ads and algorithmic feeds (Revanced has this). “Youtube with no ads!” is an easy way to convince non-technical people. Help her subscribe to safe channels and monitor what she watches.

Not everyone is willing to switch platforms that easily. You can’t always be idealistic.

That’s why I suggested Revanced with “disable recommendations” patches. It’s still Youtube and there is no new platform to learn.

Also consider - don’t sign in to YouTube. Set uBlock, and Sponsorblock, too - so when youtube does get watched, the ads and promotions get skipped.

Everyone here is missing the point - by signing in to YouTube, you give Google more power to dominate your life.

With a simple Inoreader extension in Firefox, you can visit a youtube/youtuber/video page and subscribe to the feed.

Search interesting channels and save them to Feedly, or Inoreader.

Pin those to Firefox, so that your feeds are always refreshed and visible.
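If you’d rather set the feeds up by hand, here is a minimal sketch of the idea, assuming you already know each channel’s ID. YouTube serves a per-channel feed at `https://www.youtube.com/feeds/videos.xml?channel_id=...`, and both Feedly and Inoreader can import an OPML file. The channel names and IDs below are made-up placeholders, and the function names are just mine for illustration.

```python
from urllib.parse import urlencode
import xml.etree.ElementTree as ET

# Base address of YouTube's per-channel feed.
FEED_BASE = "https://www.youtube.com/feeds/videos.xml"


def channel_feed_url(channel_id: str) -> str:
    """Return the feed URL for a single YouTube channel ID."""
    return f"{FEED_BASE}?{urlencode({'channel_id': channel_id})}"


def build_opml(channels: dict[str, str]) -> str:
    """Build an OPML document (as a string) from {title: channel_id}."""
    opml = ET.Element("opml", version="2.0")
    head = ET.SubElement(opml, "head")
    ET.SubElement(head, "title").text = "YouTube subscriptions"
    body = ET.SubElement(opml, "body")
    for title, channel_id in channels.items():
        ET.SubElement(
            body,
            "outline",
            type="rss",
            text=title,
            xmlUrl=channel_feed_url(channel_id),
        )
    return ET.tostring(opml, encoding="unicode")


if __name__ == "__main__":
    # Placeholder IDs (assumption): replace with the real channel IDs.
    safe_channels = {
        "Cooking channel": "UC_PLACEHOLDER_COOKING",
        "Gardening channel": "UC_PLACEHOLDER_GARDEN",
    }
    print(build_opml(safe_channels))
```

Save the printed output as a `.opml` file and import it into the reader. That way only the channels you picked show up, with no recommendations mixed in.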

How does revanced remove the algorithm stuff? I have been using it for a long time but never saw this feature

I don’t know for sure, because I actually like my algorithmic recommendations on YT (I’ve done a good job of carefully curating it to show me good stuff). But if I had to guess, I’d say it removes “related videos” from its watch page, and removes the “home” tab. So it will show search results and subscriptions, but not the general algorithmic content.

There should be a patch for it that hides the “recommended” feed on the homepage. I’m not certain because I never use Youtube with an account or the official website/app, so I don’t get targeted recommendations.

How do you disable algorithmic feeds in revanced? This sounds perfect…

Ooh, you can even set the app to open automatically to the subscription feed rather than the algo-driven home. The app does probably need somebody knowledgeable about using the app patcher every half-dozen months to update it though.

In addition to everything everyone here said, I want to add this: don’t underestimate the value in adding new benign topics to her feed. Does she like cooking, gardening, DIY, art content? Find a playlist from a creator and let it autoplay. The algorithm will pick it up and start to recommend that creator and others like it. You just need to “confuse” the algorithm so it starts to cater to different interests. I wish there was a way to block or mute entire subjects on there. We need to protect our parents from this mess.

Do the block and uninterested stuff but also clear her history and then search for videos you want her to watch. Cover all your bases. You can also try looking up debunking and deprogramming videos that are specifically aimed at fighting the misinformation and brainwashing.

This is a really good idea - so she begins to see the videos of people who were once where she is now.

I’ve found that if I remove things from my history, it stops suggesting related content. Hopefully that helps.

That’s what I do, but it requires doing it frequently. As for OP, they should start fresh with a new profile, which can be under the same login via channels.

Once you have it dialed you can disable your search/watch history.

Some of my personal hobbies/interests tend to appeal to the right wing tinfoil hat fringe (outdoorsy hunting/camping/survivalist stuff, ham radio, a casual interest in guns, and I have a small libertarian streak although I’d generally call myself a liberal these days, etc.). So left unchecked, the algorithm will show me some crazy stuff. It used to happen to me occasionally that while I was googling around for info and reviews on some piece of kit, I’d be clicking into different forums to see people discussing them, then I’d realize at some point that I was on the Stormfront forums (and immediately nope the fuck out of there). Usually it would be a totally normal conversation of people discussing a ham radio or gun or whatever until halfway down the page someone would drop a comment like “in my opinion, every nationalist should own one of these”.

So my YouTube history has been paused for years, probably since well before Trump and QAnon and everything really took off, and frankly I’ve been happy with my recommendations. I haven’t looked too much into how those recommendations are determined now, but it seems to mostly be based on what channels I’m subscribed to.

There is definitely more going into it though, because I get a lot of recommendations about the breed of dog I have despite not subscribing to any dog-related channels, so it’s definitely pulling from the rest of my Google search history or social media or something, or secretly keeping track of my history a little in the background somewhere, so you’ll probably have to do some pruning in the rest of her online accounts too.

Some things just completely skew your home page with just a single viewing. I am very careful with my watch history, only watching one off stuff from other sites in a private window. That seems to work well.

That was something I tried today: I deleted the YouTube history and paused it. It didn’t have immediate effects, but I hope it helps somewhat.

Another thing that I’d consider doing is to unpause her history for a bit, have YouTube play a few videos about other stuff (topics that she’d watch, based on her tastes, minus the q-anon junk), then pause it again. This might tell YouTube “I want to watch this”.

Side note: that’s how mainstream media is making every single one of us flat and single-minded. So you watched X? Onwards you shall see X nonstop.

I fucking hate this.

I got a new phone recently and wanted to watch some videos on recommended cases and screen protectors, and now 70% of my scrolling feed is related to this God damn phone.

I own it already, YouTube. I don’t need to see any more content on this phone, ffs.

I had a similar case. I watched a video in which a former jewel thief or bank robber analyzed the GTA5 jewel store heist. After that, I kept getting recommended every single one of his vlogs where he talked about what life in prison was like. It went on for a few weeks, but it eventually stopped as I never clicked on them.

You can also go here: https://myactivity.google.com/product/youtube and set it to auto-delete.

Thanks, going to check that too.

I would not pause the history. I would populate the history with balanced content and then (if you feel like your mother will actively look for radicalized content) pause it.

That way the algorithm will have something “balanced” to work on.

Re not acting immediately: per GDPR they can’t keep deleted data on your activity more than a few months (and they probably delete it before the deadline).

Also delete the search history. After that, search for non-toxic topics, like live shows of her favourite artists, recipes, nature documentaries…

Video likes have an effect on the algorithm. If she has lots of liked videos, delete them. Any playlists she made would influence the algorithm too.

Not sure if video feedback data gets used, but you can clear them too. I’m talking about those “What did you think of this video?”, “Please tell us more” questions.

Edit: I’m not entirely sure if there is an option to delete the “likes”. You might have to manually “unlike” them.

My mother’s partner had some small success. On the one hand, he did what you do already: unsubscribed from bad stuff and subscribed to some other stuff she might enjoy (nature channels, religious stuff which isn’t so toxic, arts and crafts…), and also blocked some tabloid news on the router. On the other hand, he tried getting her outside more; they go on long hikes now and travel around a lot. Plus he helped her find some hobbies (the arts and crafts one) and sits with her sometimes, showing lots of interest and positive affirmation when she does that. Since religion is so important to her, they also often go to (a not so toxic or cultish) church and church events and the choir and so on.

She’s still into it to an extent. Anti-vax will probably never change since she can’t trust doctors and hates needles, and she still votes accordingly (which is far right in my country) too, which is unfortunate. But she’s calmed down a lot over it and isn’t quite so radical and full of fear all the time.

Edit: oh and myself, I found out about a book, “How to have impossible conversations”. The topics in there can be weird, but it helped me in staying somewhat calm when confronted with outlandish beliefs and not reinforcing them, though who knows how much that helps, if anything (I think her partner contributed a LOT more than me).

Reading this makes me realize I’m not as alone as I thought. My mother too has gone completely out of control in the last 3 years with every sort of conspiracy theory (freemasonry, 5 families ruling the world, radical anti-vax, this Pope is not the real Pope, EU is to blame for anything, etc.). I manage to “agree to disagree” most of the time but it’s tough sometimes… People, no matter their degree of education, love simple explanations to complex problems and an external scapegoat for their failures. This content will always have an appeal.

Yeah, you are not alone at all. There is an entire subreddit for this, r/qanoncasualties, and while my mother doesn’t know it, a lot of this stuff comes from this Q thing. Like, why else would a person like her in Austria care so much about Trump or Hillary Clinton or a guy like George Soros? Also, a lot of it is thinly veiled borderline Nazi stuff (it’s always the Jews or other races or minority groups at fault), which is the next thing: she says she hates Nazis, and yet they got her to vote far right and support all sorts of agendas. There is some mental trick happening there where “the left is the actual Nazis”.

Well, she was always a bit weird with religion, aliens, wonder cures and other conspiracy stuff, but it really got turned towards these political aims in the last years. It feels malicious and it was distressing to me, though I’m somewhat at peace with it now. The world is a crazy place and out of my control; I just try to do the best with what I can affect.

By now it is beyond apparent that corporations cannot be relied upon to regulate themselves. In the mindless dynamic they have set in motion, the endgame is to keep us in a perpetual state of anguish.

How this type of vulgarly cynical content is not considered obscene and banned, is a failure of the highest magnitude.

YouTube has a delete option that will wipe the recorded trend. Then just watch a couple of videos and subscribe to some healthy stuff.

Very cool, had no idea it was that simple

https://support.google.com/youtube/answer/55759?hl=en#zippy=%2Cdelete-your-channel-permanently

When you delete your account it also deletes your history. I assume she isn’t a content creator, so the rest won’t be concerning. The next time you log in using your Gmail it will just be like when you first logged into YouTube.

As you plan on messing with her feed, I’d like to warn you that a sudden change in her recommendations could seem to her like the whole internet got censored and she can’t see the truth anymore. She would be cut off from a sense of community and a sense of having special inside knowledge, and that may make things worse rather than better.

My non-professional prediction is that she would get bored with nothing to worry about and start actively seeking out bad news to worry over.

I’d like to warn you that a sudden change in her recommendations could seem to her like the whole internet got censored and she can’t see the truth anymore.

This is exactly the response my neighbors have: “Things get censored, so that must mean that the gov must be hiding this info.” It’s truly insane to see this happen.

What you’re describing is a cult or psychosis. Stopping it is a good thing, not something to be worried about.

Well yeh but, the concern is unintended consequences which sounds entirely likely. It’s kind of fucked up to even be considering doing this to an adult, entirely entitled to their own choice of viewing habits, without their knowledge and by surreptitious use of their account and it’s only dubiously ethical because it’s an act of kindness against machine generated manipulation of a far more insidious nature and for far less than altruistic reasons.

You’d hardly want it to backfire after taking this step. By posing this question, the OP obviously already considers the pseudo cult his Mum’s getting sucked into to be a bad thing, so it doesn’t need further signalling. But being sure to do it carefully, with an eye on the actual effect, is probably wise, or the whole endeavour would be a waste and might push her further into the waiting arms of lunatics and charlatans.

I agree that cult mindset or psychosis is what she has going on. But suddenly removing the source from her computer without her knowledge could backfire because she’s already so invested.

Cold turkey isn’t the best solution if you truly think that is where they’re at for exactly the reason they described. How don’t you get that?